DURATION

12 months

(June 2021 - June 2022)

MY ROLE

UX Researcher

TEAM MEMBERS

Ather Sharif

Jaden Wang

SKILLS LEARNED

Wizard of Oz Study

Data Analysis

Information scraping

TOOLS

Zoom

Excel

Otter.ai

VoxLens allowed me to make a meaningful, tangible impact for underrepresented visually impaired users.

Engaging in user-centered research, I had the opportunity to uncover and address the unique challenges these users face when navigating data visualizations.

The project, led by Ather Sharif, not only challenged me to think creatively but also allowed me to apply inclusive design principles that enhanced the accessibility and usability of the product. I collaborated closely with developers, designers, and end-users, which deepened my understanding of the technical and empathetic nuances essential to accessible design.

Contributing to a project with a positive, inclusive purpose was immensely rewarding and reinforced my passion for creating experiences that are universally accessible.

Data Visualizations are difficult to navigate for screen reader users.

According to Sharif et al., because visualizations are inaccessible, screen-reader users extract information 62% less accurately and spend 211% more time interacting with online data visualizations than non-screen-reader users.

VoxLens offers a multi-modal approach

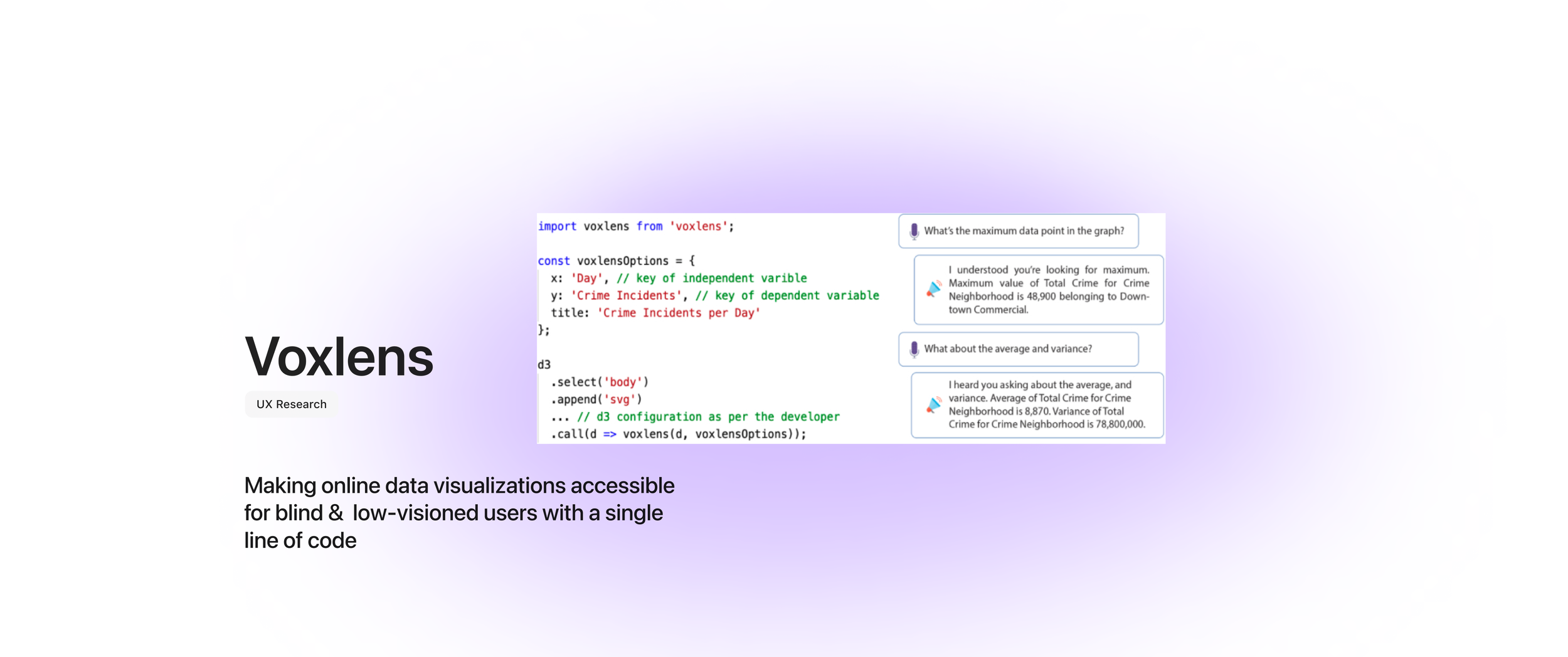

Here’s what you can do with a single line of code

How Modes Came to Be

The three main features listed above were built iteratively: we sought feedback from screen-reader users through our Wizard-of-Oz studies and used it to identify areas of improvement for the VoxLens features.

Wizard of Oz Study - a user testing method in which participants interact with a seemingly autonomous interface. In reality, a human operator (the wizard) controls the system responses behind the scenes, simulating the functionality of a not-yet-built product.

In our studies, we, the “wizards,” simulated the auditory responses from a hypothetical screen reader. Five participants interacted with all of the VoxLens modes and were interviewed in a semi-structured manner with open prompts at the end of each of their interactions.

Specifically, we asked them to identify the features that they liked and the areas of improvement for each mode.

Our Wizard-of-Oz studies revealed that participants liked clear instructions and responses, integration with the user's screen reader, and the ability to query by specific terminologies. They identified three key areas of improvement:

An interactive tutorial to become familiar with the tool

A help menu to determine which commands are supported

The inclusion of the user's query in the response

Therefore, after recognizing the commands and processing their respective responses, VoxLens delivers a single combined response to the user via their screen reader. This approach lets screen-reader users receive the answers to multiple commands in one response.
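To make the combined-response idea concrete, here is a minimal sketch in Python. It is not VoxLens's actual implementation: the command keywords, the `respond` function, and the wording of the output are all assumptions chosen for illustration. It recognizes every known command in a query, echoes the query back (one of the improvements participants asked for), and returns all the answers as one utterance.

```python
from statistics import mean

# Hypothetical command table: keyword -> function that summarizes the data.
# These names are illustrative and are not taken from the VoxLens codebase.
COMMANDS = {
    "maximum": lambda values: f"maximum is {max(values)}",
    "minimum": lambda values: f"minimum is {min(values)}",
    "average": lambda values: f"average is {mean(values)}",
}


def respond(query: str, values: list[float]) -> str:
    """Recognize every known command in the query and return one combined response."""
    lowered = query.lower()
    parts = [handler(values) for keyword, handler in COMMANDS.items() if keyword in lowered]
    if not parts:
        # Point the user to the help menu when nothing was recognized.
        return "Command not recognized. Say 'help' to hear the supported commands."
    # Echo the query back, then deliver all answers as a single utterance.
    return f"I heard: {query}. The " + ", and the ".join(parts) + "."
```

A query such as "maximum and average" would produce one sentence covering both values, rather than two separate announcements that the screen reader has to queue.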

VoxLens is an accessibility tool designed to help visually impaired users interpret data visualizations, which are often difficult to access without sight.

Through task-based experiments with 21 screen-reader users, we show that VoxLens improves the accuracy of information extraction and interaction time by 122% and 36%, respectively, over existing conventional interaction with online data visualizations.

“Our interviews with screen-reader users suggest that VoxLens is a ‘game-changer’ in making online data visualizations accessible to screen-reader users, saving them time and effort.”

This accessibility enhancement is especially valuable in educational, professional, and research settings, where data visualization is a critical part of information sharing and decision-making.

But What Makes Sonification User-Friendly?

Sonification is a main feature of VoxLens that aims to assist people who use screen readers (e.g., people who are blind or have low vision) in understanding the "ups and downs" of the data, such as trends in a temporal data set.

In this preliminary exploration, we investigated the usability and user-friendliness of data visualization sonification for screen-reader users.

Specifically, through user studies with 10 screen-reader users, we evaluated the Pleasantness, Clarity, Confidence, and Overall Score of discrete and continuous sonified responses generated using various oscillator waveforms and synthesizers.

Our results show that screen-reader users preferred distinct non-continuous responses generated using oscillators with square waveforms.
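As a rough illustration of what a discrete, square-wave sonification involves, the sketch below maps each data point to a pitch and renders it as a separate square-wave tone followed by a short silence. It is a simplified stand-in, not the code used in the study: the function names, the 220 to 880 Hz pitch range, the tone length, and the sample rate are all assumed values.

```python
def value_to_frequency(value, lo, hi, f_min=220.0, f_max=880.0):
    """Linearly map a data value in [lo, hi] to a pitch in [f_min, f_max] Hz."""
    if hi == lo:
        return (f_min + f_max) / 2  # flat series: use the middle of the range
    return f_min + (value - lo) / (hi - lo) * (f_max - f_min)


def square_tone(frequency, duration=0.2, sample_rate=8000, amplitude=0.5):
    """Return square-wave samples: +amplitude for the first half of each cycle, -amplitude for the second."""
    n = int(duration * sample_rate)
    return [
        amplitude if (i * frequency / sample_rate) % 1.0 < 0.5 else -amplitude
        for i in range(n)
    ]


def sonify(values, gap=0.05, sample_rate=8000):
    """Render one tone per data point, separated by silence so the tones stay discrete."""
    lo, hi = min(values), max(values)
    silence = [0.0] * int(gap * sample_rate)
    samples = []
    for v in values:
        samples += square_tone(value_to_frequency(v, lo, hi), sample_rate=sample_rate)
        samples += silence
    return samples
```

Higher values produce higher pitches, so a rising trend is heard as a rising sequence of tones. A continuous variant would instead glide between pitches without the silent gaps.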

Currently, VoxLens supports only two-dimensional data visualizations with a single data series and is fully functional exclusively on Google Chrome due to the Web Speech API's limited browser compatibility. Future work could expand VoxLens to handle n-dimensional data visualizations with multiple series, explore cross-browser alternatives for speech recognition, and enhance functionality based on these findings.

Participants identified three key areas for improving sonification:

Personalization: customizing auditory output such as speed and frequency

Identification of extrema/outliers: highlighting maximum and minimum data points

Multimodality: combining different instrument sounds and frequencies to better distinguish data points
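One way these three requests could fit together is sketched below in Python. Everything here is hypothetical: the `SonificationPreferences` fields, their defaults, and the instrument names are illustrative and do not describe an existing VoxLens feature. The sketch lets a user set speed and pitch range, and marks the maximum and minimum points with a different instrument.

```python
from dataclasses import dataclass


@dataclass
class SonificationPreferences:
    """Hypothetical per-user settings; field names and defaults are illustrative."""
    tone_seconds: float = 0.2         # speed: shorter tones play the series faster
    min_frequency: float = 220.0      # lowest pitch in Hz
    max_frequency: float = 880.0      # highest pitch in Hz
    extrema_instrument: str = "bell"  # distinct sound for the maximum and minimum


def plan_tones(values, prefs):
    """Return one (frequency, duration, instrument) tuple per data point."""
    lo, hi = min(values), max(values)
    span = (hi - lo) or 1  # avoid dividing by zero for a flat series
    plan = []
    for v in values:
        freq = prefs.min_frequency + (v - lo) / span * (prefs.max_frequency - prefs.min_frequency)
        # Extrema get their own instrument so they stand out from the other points.
        instrument = prefs.extrema_instrument if v in (lo, hi) else "square"
        plan.append((freq, prefs.tone_seconds, instrument))
    return plan
```

For example, `plan_tones([1, 5, 3], SonificationPreferences(tone_seconds=0.1))` would play the series twice as fast as the default and flag the first two points as the minimum and maximum.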

Enhancing VoxLens with centralized configuration management could allow screen-reader users to specify preferences for personalized trend-aware responses based on data complexity. Our findings also point to integrating insights from fields like Music Theory to improve sonified responses, particularly for users relying on auditory channels. This interdisciplinary approach holds promise for advancing the accessibility, usability, and user-friendliness of auditory data visualizations.

Final Note

We hope that by open-sourcing our code for VoxLens and our sonification solution, our work will inspire developers and visualization creators to continually improve the accessibility of online data visualizations.

We hope researchers will conduct an in-depth investigation of the usability and user-friendliness of sonified responses and improve the experiences of screen-reader users with the sonification of online data visualizations.

Overall, we hope that our work will motivate and guide future research in making data visualizations accessible.